31 Aug 2023

The Impact of Large Language Models (LLMs) on the "Build vs Buy Software" Decision: bots buy from bots?

Author

Naré Vardanyan

Co-Founder and CEO

The Build vs Buy Decision

One of the most critical business decisions you have to make has always been whether to build or buy software.

We’ve seen three eras of software: from on-prem to cloud and SaaS to the API economy. Now we are living in the fourth one, stepping into the land of large language models.

The dynamics of build vs buy are changing as we speak.

💡 At Ntropy we are focusing on turning financial data into a system of record by cleaning, enriching and categorizing transactions across different sources and geographies.

The main competition we face has typically come from in-house software solutions and workarounds.

In-house solutions, custom-built to meet specific business requirements and needs, offer a level of control and customization that off-the-shelf solutions usually can't match.

Building in-house means listening to your customer. However, this only matters if what you are building is part of your core advantage.

For instance, no matter what platform you are using to send SMS to your customers, the value to them is equal. Hence, Twilio is a great enabler for you to use vs building out the infrastructure and the carrier relationships. The unit economics of building it yourself do not make sense either, unless you are operating at a massive scale. You clearly do not need to build this in-house.

In the age of API-s, there has been a growing inclination towards buying software vs building in-house. In fact, you often encounter companies that can only exist thanks to the API-s they are built on.

Robinhood is an example of a public company that could not have gone to market if financial data aggregation and account creation API-s were not there. Revenue-based lenders like Capchase, Wayflyer and so many others would not exist if accounting and commerce API-s were not readily available.

With LLM-s entering the scene, we wanted to get a pulse check on how software buying is going to be affected this time and what second order effects we should anticipate and prepare for.

Fewer software developers in the future?

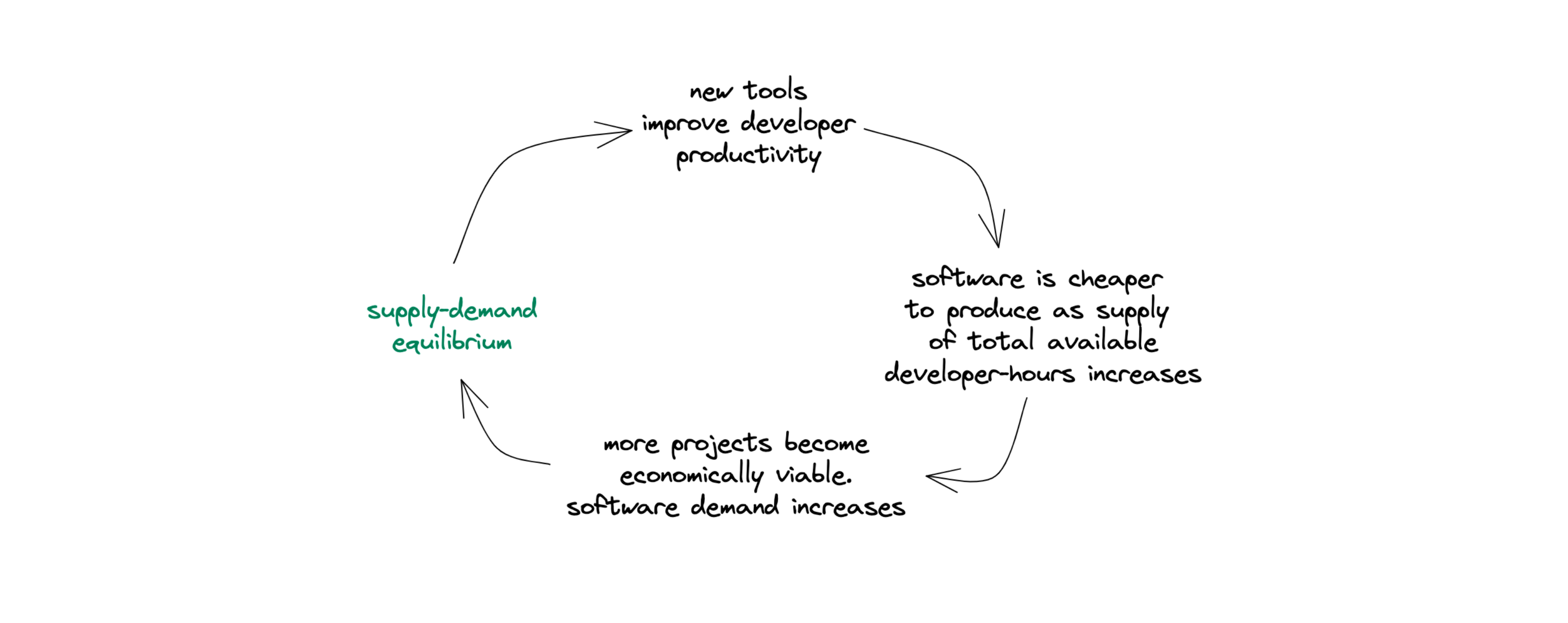

There is general chatter about software developers being less needed with the proliferation of AI, as the productivity of a single developer increases. The latter is true, yet the notion that there is going to be less to build or that we are going to be building the same amount does not make sense.

In fact, we believe the opposite is going to happen.

We will be building more complex things and more people will be entering the scene as software makers.

💡 When you give humans better and more efficient tools, they do not simply work fewer hours. They are able to build and produce more things.

This has been true historically. The industrial revolution and its effect on workers’ hours, conditions and wealth generation is a great basic example to turn to.

Given AI is changing the game for software development, it will be easier for more workers to be software makers.

“Large Language Models (LLMs) can now write substantial portions of code, provide assistance with debugging, explain functions step-by-step, and recommend system design. We are to turn a billion more people into software makers” according to Replit's CEO and co-founder. We are very much on board with this at Ntropy.

There are going to be more people building and it is going to get easier to build.

Doing more with less

Yet, you cannot deny that as code assistants or co-pilots, LLMs are significantly enhancing the productivity of engineers. By making engineers more productive, LLMs allow companies to build more with less, reducing the need to expand engineering teams and therefore the overall development costs.

We will see more operational leverage per unit of revenue than before.

Pace also means better margins and by itself becomes a strategy: the more you ship, the faster you iterate, the more you can sell. The less time you spend on features, the easier it is to kill them.

In a world where teams of 2-5 people can build solutions that command millions in ARR, how do you sell and price your products? How do you even get customers? How long until your margins are crushed by rising CAC?

The new customer acquisition game

As more solutions enter the market, they will quickly get commoditized. There will be more competition for every single customer, so rising CAC is going to become an issue.

But there will also be more companies to sell to, since more companies will be created than was ever possible before.

The big question in B2B is the following: are the incumbents going to get 10x more efficient by adding AI, or are the new smaller teams prepped to take over verticals fast enough, re-building existing software stacks more efficiently?

We believe data has a key role to play here and the outcome really depends on the specific product and use case.

If your end product is AI native and you can replicate the rest of the stack quickly enough then you can grab the land and win.

However, the bigger picture is the following. The era of narrow vertical SaaS and point solutions is coming to an end and we are going to see the rise of vertical AI. This means fewer point solutions and more platform-first plays. You have to expand before you know it. You have to layer products and serve the hierarchy of needs of your customers, as picking and procuring 10 vendors for one workflow is not going to cut it.

What is a point solution though and what are these platforms going to look like?

A point solution is a software wrapper focused on solving a single problem. For example, here is a tool to help you hire people and here is where their applications live.

A platform play follows the opposite logic: here is your hub for everything related to employees, from hiring and sourcing to onboarding, paying, evaluating performance, promoting and off-boarding.

The company that owns the primary data unit and distribution, and can quickly turn that data into AI-first features, will win.

The inevitable rise of Vertical AI

We define Vertical AI as the rise of vertical-specific, AI-powered software solutions bundled around a single primary unit of data. Such platforms are turning the data into a proactive, easily retrievable and actionable system.

The first iteration of this is going to be the dominant B2B SaaS platforms adopting LLM-s for certain activities such as analytics, customer service requests and more. We saw this happening shortly after the GPT-4 announcement.

The second iteration will come in the form of LLM agents performing tasks independently according to certain commands and goals to optimize for.

Companies like Rippling, Intercom and Segment (Twilio) are perfectly positioned here to get the early and lasting wins. They need to show their customers they will be first movers, and because of the data they hold, they are in a great position to serve them.

New teams, who can land superior products via AI features and build the rest of the functionality at 10x the pace of what their predecessors have done, have a solid fighting chance too.

In fact, sales and customer acquisition are the most ripe for automation and we foresee new giant companies rising from the army of the ones trying to create the sales co-pilot. Pylon, Clay, Apollo and many others are very exciting to watch.

💡 Across industries and use cases, no matter what you are trying to accomplish, for vertical AI, vertical data is king.

What is next for "Build vs Buy"

The "build vs buy software" decision has always been a complex one, influenced by a host of factors such as cost, control, time-to-market, scalability, and compatibility with existing software.

These considerations have evolved over time as technology has advanced, and they continue to do so in the era of LLMs.

Let us go through them one by one.

Upfront costs

In the past, the upfront cost of building software could be significantly higher than buying off-the-shelf solutions. However, the equation is changing with the advent of LLMs.

By enhancing productivity and reducing the need for large development teams, LLMs are helping to lower the upfront cost of building software.

While the rise of LLMs is reducing the time and effort required to build software, cost remains a significant factor in the "build vs buy" decision. The costs associated with implementing and maintaining LLMs can be substantial.

These costs can include the upfront investment in the technology, ongoing maintenance costs, and the costs of training staff to use the technology effectively.

Even with the productivity gains offered by LLMs, these costs can add up, potentially making off-the-shelf solutions a more cost-effective option for some businesses.

However, every dollar spent on buying software is a dollar that can't be spent elsewhere in the business.

On the other hand, every engineering hour not spent on your core proposition, the thing that sells and defines the future of your company, is also a large opportunity cost and adds to your burn.

Control and customization

Salesforce is the company that has won customization in the last couple of decades.

If we look at this example, they have made customization so hard, yet still possible, on the platform that once you invest in it, you are never going to move away from Salesforce.

Cohorts of Salesforce consultants have sprung up, making real money by turning Salesforce into what you need it to be.

Although Salesforce is expensive and painful, almost no one is investing in their own CRM any more.

Control over the software's features, updates, and maintenance has always been a crucial factor in the "build vs buy" decision. Businesses that opt to build their own software often do so because they want to have complete control over these aspects.

With LLMs, we believe any off-the-shelf software can offer a level of customization and control that was previously unreachable.

We demonstrated this with our experiment: a PFM bot built within a week on top of the Ntropy API and GPT-4. You could create a million features, fulfilling a million feature requests, by merely typing commands into a Discord bot.

Given the above, we believe controlling the data, rather than the features, is what takes centre stage in terms of strategy and how companies decide whether to build or buy.

Time to market

Time-to-market is another critical consideration. In the past, building software could be a lengthy process, making off-the-shelf solutions more appealing for businesses that needed a quick solution and could not wait to go to market.

However, as we are witnessing an accelerated software development process, time-to-market for in-house software is shrinking, potentially tipping the balance in favor of the "build" option.

Deciding what is core to your company, and therefore what you should build yourself and what you should not, is yet again going to be key here.

Following Alan Kay’s line of thought: "Make simple things simple, and complex things possible." This is even more the case right now.

💡 Way more complex things are going to be possible and the things that were complex before are becoming simpler at an exponential pace.

Scalability

Scalability is a key concern for many businesses. As a business grows and evolves, its software needs to be able to keep up. Custom-built software can be designed with scalability in mind, while off-the-shelf solutions may have limitations in terms of scalability.

Previously, businesses assumed that startup X could support them at a small scale and help them bring products to market, but that this would become a challenge once they scaled.

Infrastructure wrappers and the whole “infrastructure as a service” movement are changing the game here too. As it gets easier to add new features and functionality, and to maintain architectural complexity and large code bases, both in-house and off-the-shelf software will become far more scalable.

In the end, things are going to come down to the unit economics. How much is the software you buy adding to your bottom line and how much is it enabling in terms of incremental revenue?
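The unit economics question above can be made concrete with a few lines of arithmetic. The following is only a rough sketch: the `SoftwarePurchase` fields, the formula and the example numbers are our own illustrative assumptions, not a standard model or real figures.

```python
from dataclasses import dataclass


@dataclass
class SoftwarePurchase:
    """Hypothetical yearly figures for one bought tool, all in the same currency."""
    annual_cost: float               # fees paid to the vendor
    incremental_revenue: float       # revenue you would not have without the tool
    gross_margin: float              # share of that revenue you keep (0 to 1)
    engineering_cost_avoided: float  # in-house build and maintenance cost you skip


def net_contribution(p: SoftwarePurchase) -> float:
    """What the purchase adds to the bottom line after paying for it."""
    return p.incremental_revenue * p.gross_margin + p.engineering_cost_avoided - p.annual_cost


def return_on_spend(p: SoftwarePurchase) -> float:
    """Net contribution per unit of currency spent on the tool."""
    if p.annual_cost <= 0:
        raise ValueError("annual_cost must be positive")
    return net_contribution(p) / p.annual_cost


# Made-up example numbers, for illustration only.
example = SoftwarePurchase(
    annual_cost=50_000,
    incremental_revenue=200_000,
    gross_margin=0.5,
    engineering_cost_avoided=30_000,
)
print(net_contribution(example))  # 80000.0
print(return_on_spend(example))   # 1.6
```

A return on spend above zero means the tool pays for itself under these assumptions. The same function can be run with your estimated cost of building in-house to compare the two options side by side.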

Compatibility and integrations with existing systems

Finally, there's the issue of compatibility and integration with existing systems. In the past, integrating new software with existing systems was a difficult and time-consuming process.

LLMs, as we know, excel at understanding and generating human-like text. This can be utilized to create more intuitive interfaces for software integration.

For example, any professional could potentially describe the integration requirements in natural language, and the LLM could translate that into technical specifications or even code. The API economy is the perfect basis for this, as most of the platforms have open API specs and documentation in English.

Companies like Merge that are making integrations a breeze, and any company that is building centralized hubs of record and data models, will thrive in the age of Vertical AI (see above).

Future bets

LLMs are adding new layers of functionality to existing software solutions, enabling the rapid creation of features that were previously impossible or would have taken too long to develop.

With the rise of natural language programming, which is often referred to as prompt engineering, more people, even those who are not quite engineers, will now be able to make software and increase their output.

If you add these developments together (engineers being more productive, non-engineers working more like engineers and, finally, LLM-s allowing for a very custom feature layer that would have taken a lot more work before), the shift in companies' preferences on whether to build or to buy is apparent.

Our thesis is the following: in the short term, selling software will become harder, with fierce price competition and many more players driving prices into the ground. There will be a lot of redundancy plays, where a single need is fulfilled by many companies depending on the feature set and costs. This also means bigger teams with a large burn have to seriously reconsider their cost structure, as usage will fall and CAC will rise.

Off-the-shelf software is also going to become more custom, complex and adaptable in order to compete. The easier it is to customize your solution, the less likely you are to experience churn. The more gaps you fill, the more likely customers are to stick with your product, and the more data you have to make it even better for them.

Software agents buying from one another

With the advent of LLMs and the ongoing digitization of business processes, we are moving towards a world where procurement, testing and evaluation of B2B buying decisions are turning from an art into a science.

Given how much there is to evaluate and consider, we can foresee a situation where software agents will eventually negotiate and transact with each other, having a set of targets to optimize for.

In this future, acquiring customers will pose newer challenges.

With price competition, most software is increasingly becoming a commodity. Businesses that can improve, optimize and accelerate software acquisition and procurement have the potential to become trillion-dollar companies. The Google for B2B is going to be a reality.

Conclusions: Build vs Buy Reset

The advent of LLMs and AI doesn't just herald a shift in the "build vs buy software" decision. It also signifies a transformation in the broader business landscape, where the rules of engagement are being rewritten. As software development becomes more accessible and off-the-shelf solutions become more sophisticated, sales bots will buy from sales bots.

Procurement 2.0

In this AI-driven future, software procurement will be automated, with bots evaluating each software vendor on relevant factors such as features, price, compatibility, scalability, and customer support. The pricing of software will become dynamic, with AI-powered negotiation bots haggling over the best deals, optimizing costs, and driving efficiency.

In the beginning, being first to market and maintaining a strong brand and scale of operations will be more critical than ever. The barriers to entry will be lower, but the competition will be fierce. The best brand will rise and take land, right before things shift again. The final reality I am foreseeing is the following.

You send a command to your agent:

Command: I need to do x. Find me the best solution given y requirements and dials. Prioritize according to the business KPI-s and needs.

The agent goes in and picks the exact stack that you need.
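To make this a little more tangible, here is a deliberately simple sketch of what the selection step of such an agent could look like: filter vendors by a hard requirement (budget), then rank the rest by a weighted score that reflects your priorities. Everything in it is hypothetical, including the `Vendor` fields, the `pick_stack` function, the vendor names and all numbers. A real agent would also have to gather and verify this data itself.

```python
from dataclasses import dataclass


@dataclass
class Vendor:
    """A hypothetical vendor record as an agent might assemble it."""
    name: str
    category: str         # the need this vendor covers, e.g. "crm"
    monthly_price: float
    scores: dict          # criterion name -> score between 0 and 1


def pick_stack(vendors, weights, budget_per_category):
    """Pick, per category, the vendor with the best weighted score within budget."""
    best = {}  # category -> (vendor, score)
    for v in vendors:
        # Hard requirement: skip anything over the budget for its category.
        if v.monthly_price > budget_per_category.get(v.category, float("inf")):
            continue
        # Soft priorities: weighted sum over the criteria you care about.
        score = sum(w * v.scores.get(criterion, 0.0) for criterion, w in weights.items())
        if v.category not in best or score > best[v.category][1]:
            best[v.category] = (v, score)
    return {category: vendor.name for category, (vendor, _) in best.items()}


# Made-up vendors, weights and budgets, for illustration only.
vendors = [
    Vendor("crm_a", "crm", 300, {"features": 0.9, "scalability": 0.6, "support": 0.5}),
    Vendor("crm_b", "crm", 900, {"features": 1.0, "scalability": 0.9, "support": 0.9}),
    Vendor("crm_c", "crm", 400, {"features": 0.7, "scalability": 0.9, "support": 0.8}),
    Vendor("billing_a", "billing", 200, {"features": 0.8, "scalability": 0.7, "support": 0.6}),
    Vendor("billing_b", "billing", 150, {"features": 0.6, "scalability": 0.6, "support": 0.9}),
]
weights = {"features": 0.5, "scalability": 0.3, "support": 0.2}
budget = {"crm": 500, "billing": 250}

print(pick_stack(vendors, weights, budget))
# {'crm': 'crm_c', 'billing': 'billing_a'}
```

In this toy run the strongest CRM on paper is rejected because it breaks the budget, which is exactly the kind of trade-off the "requirements and dials" in the command are meant to express.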

Stack as a service vs software as a service is next up for SaaS.

Trust me, humans are not going to be the ones evaluating that stack.